Kun Duan, Dhruv Batra, and David Crandall

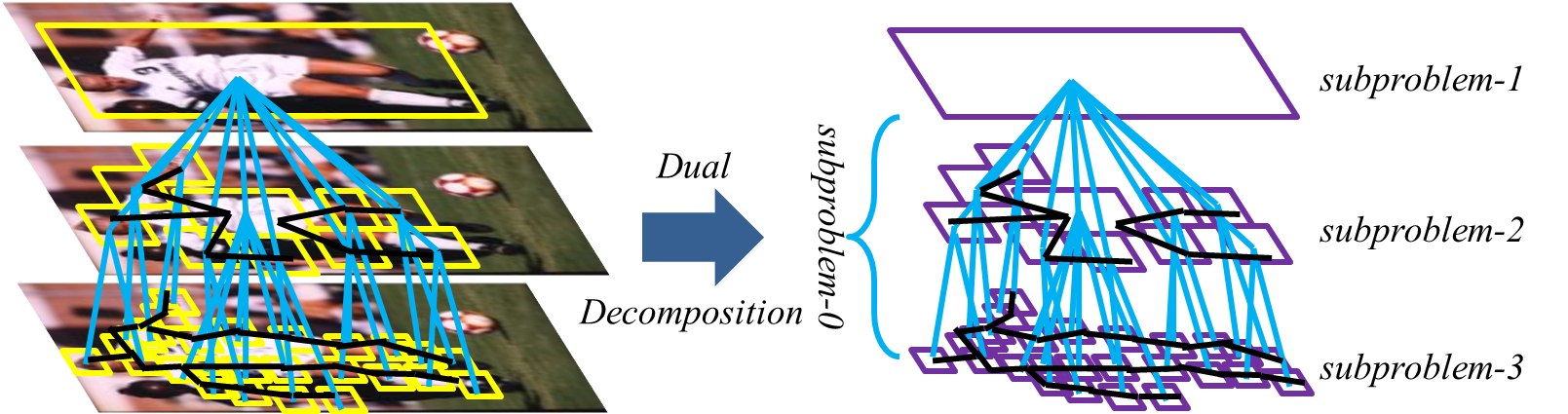

We propose a new approach to part-based human pose estimation using multi-layer composite models, in which each layer is a tree-structured pictorial structure that models pose at a different scale and with a different graphical structure. At the highest level, the submodel acts as a person detector, while at the lowest level, the body is decomposed into a collection of many local parts. Edges between adjacent layers of the composite model encode cross-model constraints. This multi-layer composite model relaxes the independence assumptions of traditional tree-structured pictorial structure models while still permitting efficient inference via dual decomposition. We also propose an optimization procedure for jointly learning the entire composite model. Our approach outperforms the state of the art on the challenging Parse and UIUC Sport datasets.
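
To make the inference idea concrete, the following is a minimal sketch of dual decomposition on a toy problem, not the implementation used in this work. It assumes a three-variable loopy model with two labels per variable, split into two tree-structured subproblems that must agree on every variable. All names (`solve`, `dual_decomposition`, `unary`, `edges_a`, `edges_b`) and all scores are hypothetical, and each subproblem is solved by brute-force enumeration as a stand-in for the dynamic programming one would normally use on a tree.

```python
from itertools import product


def solve(n_vars, n_labels, unary, edges):
    """Exact MAP for one subproblem by enumeration (stand-in for tree DP)."""
    def score(x):
        s = sum(unary[i][x[i]] for i in range(n_vars))
        s += sum(table[x[i]][x[j]] for (i, j), table in edges.items())
        return s

    best = max(product(range(n_labels), repeat=n_vars), key=score)
    return list(best), score(best)


def dual_decomposition(n_vars, n_labels, unary, edges_a, edges_b, iters=100):
    """Subgradient dual decomposition over two subproblems A and B.

    A holds the unary scores plus edges_a; B holds edges_b only.
    lam[i][k] is the Lagrange multiplier for "variable i takes label k".
    Returns (labeling, best dual bound seen, whether A and B agreed).
    """
    lam = [[0.0] * n_labels for _ in range(n_vars)]
    best_dual = float("inf")
    for t in range(1, iters + 1):
        # Multipliers enter A with a plus sign and B with a minus sign.
        un_a = [[unary[i][k] + lam[i][k] for k in range(n_labels)]
                for i in range(n_vars)]
        un_b = [[-lam[i][k] for k in range(n_labels)] for i in range(n_vars)]
        xa, va = solve(n_vars, n_labels, un_a, edges_a)
        xb, vb = solve(n_vars, n_labels, un_b, edges_b)
        best_dual = min(best_dual, va + vb)  # upper bound on the joint optimum
        if xa == xb:
            return xa, best_dual, True  # agreement certifies optimality
        step = 1.0 / t  # diminishing step size
        for i in range(n_vars):
            # Subgradient step: discourage A's choice, encourage B's.
            lam[i][xa[i]] -= step
            lam[i][xb[i]] += step
    return xa, best_dual, False


# Toy 3-cycle: A gets edges (0,1) and (1,2); B gets the closing edge (0,2).
agree = [[0.5, 0.0], [0.0, 0.5]]  # small reward when two labels match
unary = [[0.0, 2.0], [1.5, 0.0], [0.0, 3.0]]
edges_a = {(0, 1): agree, (1, 2): agree}
edges_b = {(0, 2): agree}

labels, bound, agreed = dual_decomposition(3, 2, unary, edges_a, edges_b)
print(labels, bound, agreed)
```

When the two subproblems return the same labeling, the dual bound equals the joint score, so that labeling is a global optimum of the combined loopy model. Agreement is not guaranteed in general; when it does not occur, the bound still holds and a labeling has to be decoded heuristically.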

Figure 1: Illustration of our multi-layer composite part-based model.
